Representative program design — this case study illustrates the type of engagement Shailka-Robotics is built to deliver, not a completed project.

Situation

Consider a consumer electronics manufacturer operating four high-volume SMT (surface mount technology) lines that needs to modernize its quality inspection program. Manual visual inspection at end-of-line stations catches only 82% of cosmetic defects, and the inspection bottleneck limits line speed. The quality team has attempted prior ML-based inspection pilots that failed to reach production due to insufficient training data diversity and brittle model performance across product variants.

Key challenges:

- Training data scarcity: The defect rate is approximately 0.3%, so collecting a balanced dataset of defective samples requires months of production logging. Rare defect types (hairline cracks, solder bridge micro-shorts, connector pin misalignment) have fewer than 50 labeled examples each

- Product variant complexity: The lines produce 14 distinct board variants with different component placements, colors, and form factors. Models trained on one variant perform poorly on others without extensive re-annotation

- Operator workflow friction: Previous pilots generated alerts that operators found unreliable because of a high false positive rate, which led to alert fatigue and, eventually, to operators bypassing the system

- Infrastructure fragmentation: Camera feeds, inference servers, and alert dashboards run on disconnected systems with no unified pipeline for model updates, performance monitoring, or feedback loops
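
The data-scarcity point can be made concrete with a little arithmetic. The sketch below takes the 0.3% defect rate from the scenario and estimates how many boards must pass through inspection to observe a given number of defective samples. The daily throughput is not part of the scenario, so it is left as a caller-supplied, hypothetical parameter.

```python
import math

DEFECT_RATE = 0.003  # ~0.3% of boards are defective (from the scenario)


def boards_needed(target_defective: int, defect_rate: float = DEFECT_RATE) -> int:
    """Expected number of boards to inspect before seeing `target_defective` defects."""
    return math.ceil(target_defective / defect_rate)


def production_days(boards: int, boards_per_day: int) -> float:
    """Days of logging needed; `boards_per_day` is a hypothetical throughput."""
    return boards / boards_per_day


if __name__ == "__main__":
    boards = boards_needed(1000)
    print(f"Boards to log for 1,000 defective samples: {boards:,}")
    # 5,000 boards/day is an illustrative value, not a figure from the scenario.
    print(f"Days at 5,000 boards/day: {production_days(boards, 5000):.0f}")
```

Under these assumptions, roughly a third of a million boards are needed for 1,000 defective samples of any kind, and a rare defect type that accounts for only a small share of all defects takes proportionally longer.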

Technical Architecture

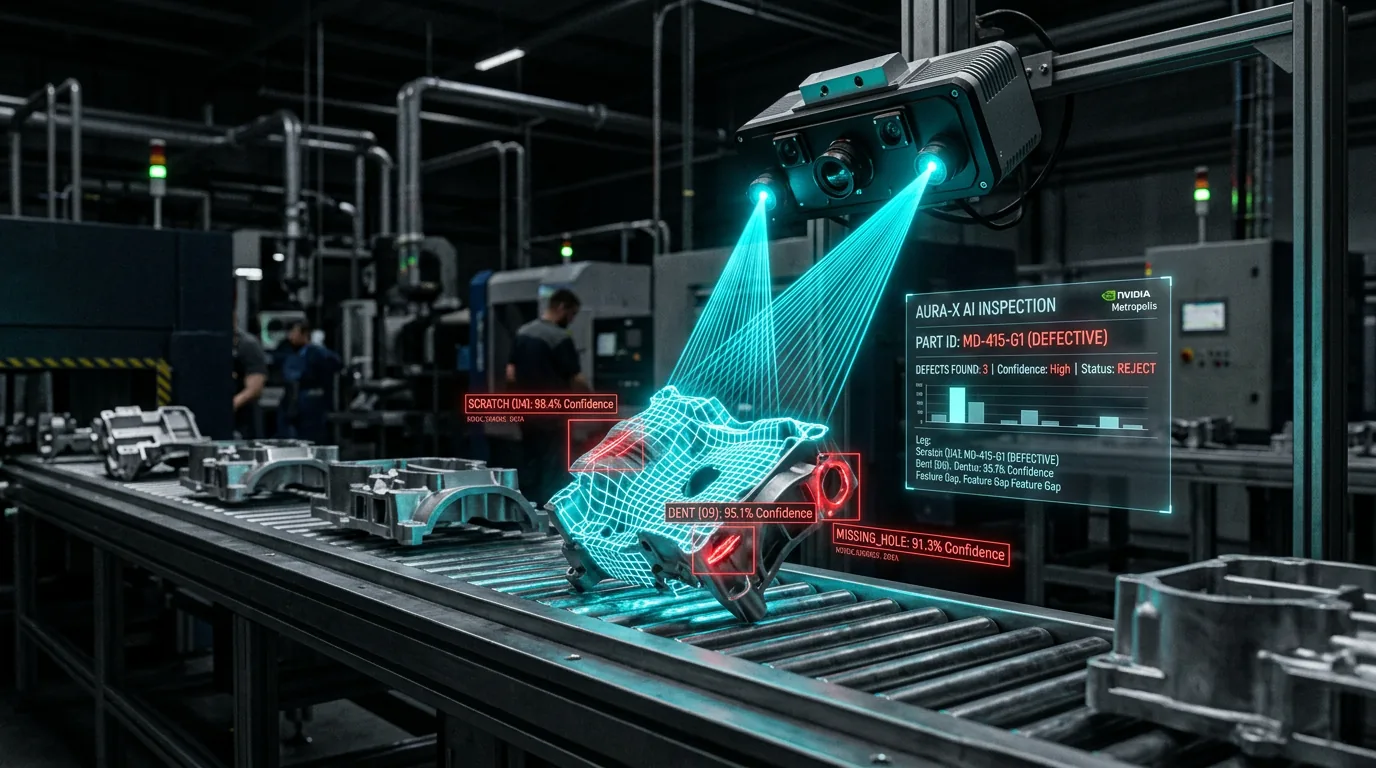

This program design specifies an integrated vision analytics pipeline with four layers:

Synthetic Data Generation (Replicator): NVIDIA Replicator generates photorealistic synthetic inspection images across all 14 board variants. Domain randomization is applied to lighting angle, background texture, component placement jitter, and defect morphology. The pipeline targets 180,000+ synthetic images in the first batch, covering 23 defect categories including edge cases with fewer than 50 real-world examples.
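
To illustrate what domain randomization means here, the following minimal sketch samples one set of scene parameters per synthetic image. It is plain Python and deliberately does not use the Replicator API. The parameter ranges, texture names, and the `SceneParams` structure are hypothetical; only the counts of 14 variants and 23 defect categories come from the design above.

```python
import random
from dataclasses import dataclass

NUM_VARIANTS = 14            # board variants (from the program design)
NUM_DEFECT_CATEGORIES = 23   # defect categories (from the program design)
# Hypothetical texture names, for illustration only.
BACKGROUND_TEXTURES = ["conveyor_matte", "conveyor_gloss", "tray_grey"]


@dataclass(frozen=True)
class SceneParams:
    variant_id: int
    defect_category: int
    lighting_angle_deg: float
    background_texture: str
    jitter_x_mm: float
    jitter_y_mm: float
    defect_scale: float


def sample_scene(rng: random.Random) -> SceneParams:
    """Draw one randomized scene description (ranges are assumed, not specified)."""
    return SceneParams(
        variant_id=rng.randrange(NUM_VARIANTS),
        defect_category=rng.randrange(NUM_DEFECT_CATEGORIES),
        lighting_angle_deg=rng.uniform(0.0, 90.0),
        background_texture=rng.choice(BACKGROUND_TEXTURES),
        jitter_x_mm=rng.uniform(-0.2, 0.2),
        jitter_y_mm=rng.uniform(-0.2, 0.2),
        defect_scale=rng.uniform(0.5, 1.5),
    )


def sample_batch(n: int, seed: int = 0) -> list:
    """Sample `n` scene descriptions reproducibly from a fixed seed."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]


if __name__ == "__main__":
    for scene in sample_batch(3, seed=42):
        print(scene)
```

In a real pipeline each sampled description would drive a renderer. Seeding the generator keeps a batch reproducible, which helps when a model regression needs to be traced back to the data it was trained on.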

Model Training (TAO Toolkit): Transfer learning pipelines are configured using NVIDIA TAO with a pre-trained backbone (ResNet-50). The training loop mixes synthetic data (70%) with curated real-world samples (30%) and uses class-balanced sampling so that rare defect categories are not swamped by common ones. Automated model evaluation measures precision, recall, and F1 per defect category against a held-out validation set of manually verified production images.
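
The 70/30 mix, the class balancing, and the per-category metrics can be sketched in plain Python. This is an illustration under stated assumptions, not the TAO Toolkit's own data loader or evaluator. The function names, the round-robin balancing scheme, and the fallback to the other source when a category has no samples in the chosen one are all choices made for this example.

```python
import random


def draw_batch(synthetic, real, batch_size, synthetic_fraction=0.7, rng=None):
    """Return (category, source, sample) tuples for one training batch.

    `synthetic` and `real` map a defect category to a list of samples.
    """
    rng = rng or random.Random()
    pools = {"synthetic": synthetic, "real": real}
    categories = sorted(
        c for c in set(synthetic) | set(real) if synthetic.get(c) or real.get(c)
    )
    if not categories:
        raise ValueError("no samples available")
    n_synthetic = round(batch_size * synthetic_fraction)
    sources = ["synthetic"] * n_synthetic + ["real"] * (batch_size - n_synthetic)
    rng.shuffle(sources)  # decouple the source from the category order
    batch = []
    for i, source in enumerate(sources):
        category = categories[i % len(categories)]  # equal share per category
        pool = pools[source].get(category)
        if not pool:  # e.g. a rare defect with no real examples yet
            source = "real" if source == "synthetic" else "synthetic"
            pool = pools[source][category]
        batch.append((category, source, rng.choice(pool)))
    return batch


def per_category_metrics(y_true, y_pred):
    """Precision, recall, and F1 for each label, computed one-vs-rest."""
    pairs = list(zip(y_true, y_pred))
    metrics = {}
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        metrics[label] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics
```

Note that the fallback shifts the realized synthetic share above 70% for categories that have no real examples, so the actual mix is worth logging per batch rather than assuming.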

Inference Pipeline (DeepStream + Metropolis): NVIDIA DeepStream is deployed for real-time multi-stream video analytics, processing feeds from 8 inspection cameras across the four lines. DeepStream handles frame decoding, model inference, and result aggregation. The Metropolis framework provides the application layer for alert routing, zone-based configuration, and integration with the factory's existing MES (manufacturing execution system).

Operator Feedback Loop: An operator review dashboard provides three tiers: automated pass (high-confidence OK), automated reject (high-confidence defect), and human review (low-confidence zone). Operator corrections in the human review tier are automatically logged and fed back into the next training cycle, creating a continuous improvement loop.
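
The three-tier routing can be sketched as a pair of thresholds on the model's defect score. The threshold values, the `Tier` and `FeedbackLog` names, and the score convention (0 means confidently OK, 1 means confidently defective) are assumptions for illustration. In practice the thresholds would be tuned against the validation set.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    AUTO_PASS = "auto_pass"
    AUTO_REJECT = "auto_reject"
    HUMAN_REVIEW = "human_review"


# Hypothetical thresholds on a defect score in [0, 1].
PASS_BELOW = 0.05
REJECT_ABOVE = 0.95


def route(defect_score: float,
          pass_below: float = PASS_BELOW,
          reject_above: float = REJECT_ABOVE) -> Tier:
    """Map a defect score to one of the three review tiers."""
    if not 0.0 <= defect_score <= 1.0:
        raise ValueError("defect_score must be in [0, 1]")
    if defect_score <= pass_below:
        return Tier.AUTO_PASS
    if defect_score >= reject_above:
        return Tier.AUTO_REJECT
    return Tier.HUMAN_REVIEW


@dataclass(frozen=True)
class Correction:
    board_id: str
    model_score: float
    operator_label: str  # e.g. "ok" or a defect category name


class FeedbackLog:
    """Collects operator decisions from the human review tier."""

    def __init__(self) -> None:
        self._items = []

    def record(self, board_id: str, model_score: float, operator_label: str) -> None:
        if route(model_score) is not Tier.HUMAN_REVIEW:
            raise ValueError("only human-review items carry operator labels")
        self._items.append(Correction(board_id, model_score, operator_label))

    def export_for_training(self) -> list:
        """Return a copy of the logged corrections for the next training cycle."""
        return list(self._items)
```

Widening the gap between the two thresholds sends more boards to operators and lowers the risk of a wrong automated decision. Narrowing it does the opposite, so the share of volume in the human review tier follows directly from this choice.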

Implementation Timeline

| Phase | Duration | Deliverable |
|---|---|---|
| Synthetic data generation and curation | 3 weeks | 180,000+ annotated synthetic images |
| Model training and evaluation | 4 weeks | Production-ready defect detection model |
| DeepStream pipeline deployment | 3 weeks | Multi-camera real-time inference |
| Operator dashboard and feedback loop | 2 weeks | Three-tier review workflow |

Projected Impact

- 70% reduction in manual inspection effort targeted, with operators reviewing only the low-confidence tier (approximately 8% of total volume)

- 97%+ detection rate targeted across all 23 defect categories, up from 82% with manual inspection

- False positive rate below 2% targeted, a level intended to stay within operator tolerance thresholds and avoid the alert fatigue seen in earlier pilots

- 14 product variants covered by a single model with variant-aware inference

- Training cycle time of 4 hours targeted for model updates incorporating new operator feedback data
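
To put the detection-rate and review-volume targets in concrete terms, the sketch below works through the arithmetic for a batch of boards. It combines the 0.3% defect rate from the Situation section with the 82% and 97% detection rates and the 8% human-review share. Applying all of these to the same population of boards and defects is a simplifying assumption, since the 82% figure is stated for cosmetic defects.

```python
DEFECT_RATE = 0.003        # from the Situation section
MANUAL_DETECTION = 0.82    # current manual inspection
TARGET_DETECTION = 0.97    # targeted rate with the pipeline
HUMAN_REVIEW_SHARE = 0.08  # share of volume routed to operators


def per_batch(boards: int) -> dict:
    """Expected counts for a batch of `boards`, rounded to whole boards."""
    defective = round(boards * DEFECT_RATE)
    return {
        "defective": defective,
        "missed_manual": round(defective * (1 - MANUAL_DETECTION)),
        "missed_target": round(defective * (1 - TARGET_DETECTION)),
        "human_reviewed": round(boards * HUMAN_REVIEW_SHARE),
    }


if __name__ == "__main__":
    for name, value in per_batch(100_000).items():
        print(f"{name}: {value:,}")
```

Under these assumptions, per 100,000 boards the expected number of missed defects falls from 54 to 9, while operators look at about 8,000 boards.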

Expected Outcome

A manufacturer following this program would be positioned to reach production-grade defect detection across all four SMT lines. The synthetic data pipeline is designed to remove the training data bottleneck that blocks conventional ML inspection pilots, and the operator feedback loop is intended to improve the model with each production cycle. Line speed improvements are expected once inspection is no longer the bottleneck, and quality teams can track per-variant detection metrics in real time through the Metropolis dashboard.